AI-driven Web-based Ancillary Lab Assistant | UNO Q & Gemini

May 10, 2026

Based on Arduino UNO Q, this lab assistant is able to show real-time sensor readings, identify users by fingerprint, recognize lab equipment via object detection, generate AI lessons via Gemini, and talk through its full-fledged web dashboard.


Hardware

- 1 × Arduino UNO Q 4GB

- 1 × UGREEN 5-in-1 100W USB-C Hub with 4K@60Hz HDMI and 3 * USB-A 3.0

- 1 × A4 Tech PK-910H USB Webcam (1080p)

- 1 × Raspberry Pi 15W 5.1V / 3.0A USB-C Power Supply

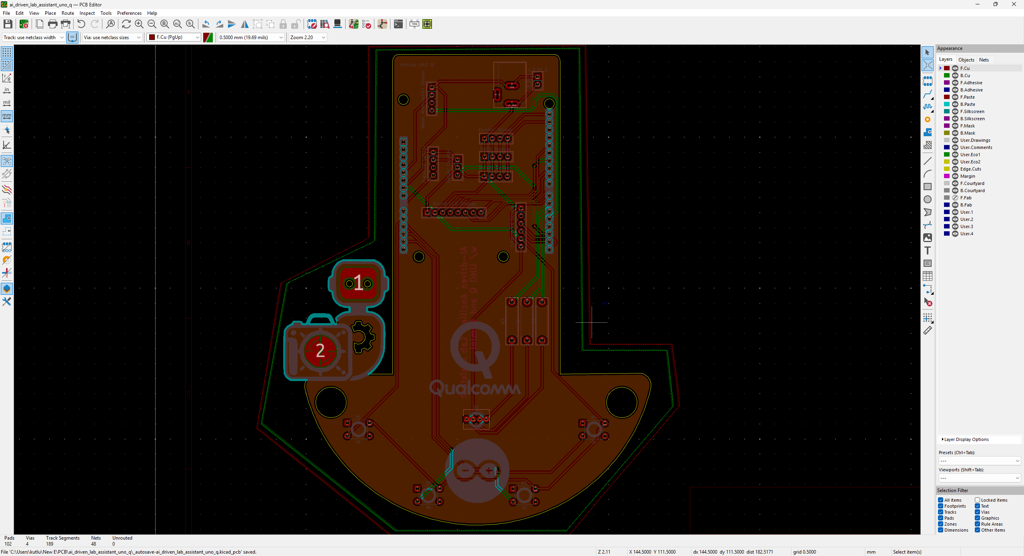

- 1 × Custom PCB

- 1 × DFRobot Capacitive Fingerprint Sensor (UART)

- 1 × DFRobot Gravity: Electrochemical Alcohol Sensor

- 1 × DFRobot Gravity: 1Kg Weight Sensor Kit - HX711

- 1 × DFRobot Gravity: Geiger Counter Module

- 1 × DFRobot Gravity: Electrochemical Nitrogen Dioxide Sensor

- 1 × Seeed Studio Grove: Integrated Pressure Sensor Kit (MPX5700AP)

- 1 × Seeed Studio Grove: Water Atomization Sensor (Ultrasonic)

- 1 × DFRobot Gravity: GNSS Positioning Module

- 1 × Waveshare 1.28" Round LCD Display Module (GC9A01)

- 4 × Button (6x6)

- 1 × 5 mm Common Anode RGB LED

- 3 × 220Ω Resistor

- 1 × 60 mm Petri Dish

- 1 × M2 Screws, Nuts, and Washers

- 1 × USB Buck-Boost Converter Board

- 1 × Jumper Wires

- 1 × Bambu Lab A1 Combo


Description

When I heard about the launch of the brand-new Arduino UNO Q, I saw a great opportunity to redesign my previous AI-driven lab assistant project. UNO Q is designed as the first SBC (single-board computer) built on Arduino's philosophy of making professional development tools accessible, whether novices are creating introductory projects or experts are prototyping complex mechanisms rapidly yet stably. Thanks to the built-in UNO Q features and its beginner-friendly development platform, Arduino App Lab, more developers, beginner or expert, should be able to replicate, experiment with, or improve this new AI-based ancillary lab assistant.

As you may know if you have read one of my previous project tutorials, I prefer building my AIoT projects on the target development boards and environments from scratch. I enjoy developing unique methods, applications, and mechanisms to collect custom training data and achieve the intended device features, strictly following my methodology of developing proof-of-concept research projects. Nonetheless, in this project, I focused heavily on developing all lab assistant features based on the provided UNO Q and Arduino App Lab characteristics, such as the built-in Bricks, the native microprocessor-microcontroller communication procedure, and the Linux-oriented SBC board architecture. This way, anyone with a UNO Q can effortlessly replicate and examine this lab assistant without needing a deep understanding of every aspect of the project: coding, web design, neural network training, LLM implementation, 3D modeling, etc. In this regard, I hope this project serves as an entry point for developing research projects, encouraging readers to reverse-engineer the features of this AI-driven lab assistant to gain a deeper understanding of AIoT development on the edge.

Although I took inspiration from my previous lab assistant project, I heavily modified the device structure and added many new features specific to this iteration, for instance, a unique PCB (UNO Q shield) for utilizing various lab sensors to conduct LLM-assisted basic lab experiments. After months of hard work, I managed to complete the reimagined AI-driven ancillary lab assistant structure and develop all the features I envisioned on UNO Q by solely employing the Arduino App Lab development environment, which provides foundational building blocks (Bricks).

🤖 To build the ancillary lab assistant structure:

✍🏻 I designed a unique PCB as a UNO Q shield (hat) to connect the selected lab sensors and create the analog lab assistant interface, including the capacitive fingerprint sensor.

✍🏻 Then, I modeled 3D parts to design the ancillary lab assistant base, containing the USB camera and the analog interface.

✍🏻 Finally, I designed a modular lab sensor ladder, organizing all sensors and secondary experiment tools, to create a compact but easy-to-use instrument.

🤖 To accomplish all of the ancillary lab assistant features I contemplated, performed by an Arduino App Lab application:

🛠️ I trained an Edge Impulse object detection model to identify various lab equipment.

🛠️ I programmed the MCU (STM32) to collect real-time sensor information and manage the analog lab assistant interface.

🛠️ I developed a feature-rich web dashboard as the primary user interface and control panel of the lab assistant, hosted directly by the Arduino App Lab.

🛠️ I incorporated Google Gemini to enable the lab assistant to generate LLM-based lessons about the detected lab equipment.

🛠️ Thanks to the built-in background Linux MPU-MCU communication service (Arduino Router), I built the interconnected application backend in Python. It handles the data transfer between the web dashboard, the analog interface (MCU), and the Qualcomm QRB (MPU) running the essential App Lab Bricks (Docker containers): database registration, inference running, web dashboard (UI) hosting, etc.

🤖 The finalized ancillary lab assistant allows users to:

🔬 create web dashboard accounts and sign in via fingerprint authentication,

🔬 monitor real-time lab sensor readings via the analog interface or the web dashboard,

🔬 inspect LLM-generated sensor guides and experiment tips for each lab sensor via the web dashboard,

🔬 listen to LLM-generated sensor guides and experiment tips via the built-in browser text-to-speech (TTS) module,

🔬 identify lab equipment via the provided Edge Impulse FOMO object detection model,

🔬 use the predefined equipment questions or enter a specific one to generate AI lessons through Google Gemini,

🔬 access the list of LLM-generated lessons assigned to their account on the web dashboard anytime,

🔬 study LLM-generated lessons by reading them or listening to them via the TTS module.

🎁 📢 Although I did not utilize any service or product specifically sponsored for this project, I send my kind regards to DFRobot and Seeed Studio since some of the sensors were sponsored by them for my previous projects :)

Development process, thinking in terms of creating an Arduino App Lab application, and final results

As mentioned in the introduction, the development process of this AI-driven lab assistant differs quite a bit from my previous AIoT projects. Even though I developed a feature-rich web dashboard and an analog lab assistant interface individually, I built a single application within the confines of the Arduino App Lab development environment, which is specifically constructed to capitalize on the dual-brain (MPU-MCU) nature of UNO Q. Arduino App Lab provides built-in Bricks (Docker containers) for adding various fundamental capabilities to an App Lab application, such as web UI hosting and inference running for custom models, and manages all operations of the included Bricks while executing the completed application. Thus, I still utilized specific programming languages for the different aspects of the lab assistant App Lab application: Arduino for programming the STM32 microcontroller (MCU), Python for the application backend (Qualcomm MPU), and HTML, CSS, and JavaScript for the web dashboard. As a whole, however, I built a single application that the App Lab runs and manages.

I think the most prominent feature of UNO Q, with the support of the App Lab, is the built-in RPC (Remote Procedure Call) mechanism managed by the Arduino Router background Linux service, which enables developers to borrow and run functions between the Qualcomm MPU and the STM32 MCU interchangeably. The App Lab also provides a built-in web socket to transfer data between the web dashboard and the Python backend. As a result, the STM32 MCU and the web dashboard can communicate effortlessly through the same Python backend. In light of these built-in features, I decided to build the second iteration of my AI-driven lab assistant with UNO Q.
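To make the idea of providing and calling functions by name more concrete, here is a minimal, self-contained Python model of name-based remote procedure calls. It is only an illustration under stated assumptions: the `ToyRouter` class and its methods are hypothetical stand-ins that run in a single process, not the Arduino Router or App Lab API, and the two-argument `update_sensor_on_app` is a simplified placeholder.

```python
from typing import Any, Callable, Dict


class ToyRouter:
    """Hypothetical in-process stand-in for an RPC router (not the Arduino API)."""

    def __init__(self) -> None:
        self._functions: Dict[str, Callable[..., Any]] = {}

    def provide(self, name: str, func: Callable[..., Any]) -> None:
        # One side registers a function under a public name.
        self._functions[name] = func

    def call(self, name: str, *args: Any) -> Any:
        # The other side invokes it by name, without importing it directly.
        if name not in self._functions:
            raise KeyError(f"no function provided under the name {name!r}")
        return self._functions[name](*args)


def update_sensor_on_app(pressure: float, weight: float) -> str:
    # Simplified placeholder for a backend function that would forward
    # readings to the web dashboard.
    return f"pressure={pressure:.1f} kPa, weight={weight:.1f} g"


router = ToyRouter()
router.provide("update_sensor_on_app", update_sensor_on_app)
result = router.call("update_sensor_on_app", 101.3, 12.5)
```

In the real system the caller and the provider live on different processors, and the router serializes the arguments in between; the lookup-by-name step is the part this sketch tries to show.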

Generally, I thoroughly explain the setup process of my interconnected software and hardware applications according to the employed development boards, modules, environments, third-party APIs, etc. However, since I only utilized the built-in Arduino App Lab attributes to enable anyone with a UNO Q to replicate and examine this project effortlessly, I highly recommend inspecting the official Arduino UNO Q specifications and tutorials.

To be able to use UNO Q as a single-board computer and connect a USB camera, I needed a USB-C hub (dongle) with reliable HDMI, USB-A, and USB-C external power ports. Nonetheless, since UNO Q does not have a dedicated GPU, the board was too slow to run the App Lab and develop the lab assistant application, especially the web dashboard, solely in the SBC mode. Thus, I utilized the SBC mode and the network mode simultaneously, which the App Lab supports, to access UNO Q remotely from any machine connected to the local network. In this way, I was able to build the lab assistant App Lab application with the full capacity of the Arduino App Lab.

#️⃣ Since I needed to capture screenshots for this tutorial while utilizing the SBC mode, I installed a simple program to enable taking screenshots on Debian-based Linux distributions via the terminal.

sudo apt install xfce4-screenshooter

I documented the overall development process for the finalized ancillary lab assistant in the following written tutorial. Even though I exhibited all of the lab assistant features in the tutorial, I highly recommend checking the project demonstration videos that thoroughly showcase the device structure and real-time user experience of the analog assistant interface and the web dashboard.

Step 0: Integration and use cases of Google Gemini

Since I wanted users to generate AI lessons based on questions about specific lab equipment and inspect the LLM-generated lessons via the web dashboard, I decided to tailor the large language model responses to obtain lessons directly in the HTML format. In my previous experiments with different large language models, conducted while developing LLM-oriented projects, Google Gemini produced reliable, informative, and concise HTML pages for simple inquiries. Thus, I decided to utilize Google Gemini to enable the ancillary lab assistant to produce AI lessons. Furthermore, Google Gemini has a very low barrier to entry for both its primary chat application and its API services.

#️⃣ First, to be able to integrate Google Gemini into my Arduino App Lab application, I opened Google AI Studio and created a new API key specific to this project.

#️⃣ Since the App Lab already provides a Brick to integrate and use cloud LLMs in Python, I only needed to register the produced API key into my custom application. I will explain how to utilize Bricks in detail in the following steps.

#️⃣ Although I enabled users to produce AI lessons freely on different lab equipment based on predefined or specific questions, I decided to make the web dashboard present LLM-generated but curated guides with simple experiment tips about the selected lab sensors. To ensure the consistency between the user-generated AI lessons and the static (default) lab sensor guides with experiment tips, I employed the official Google Gemini chat application to produce dedicated HTML pages for each lab sensor.

❓ Such as: "Create me an HTML page explaining Gravity: Factory Calibrated Electrochemical Alcohol Sensor and the importance, dangers, and usage of alcohol in labs."
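As a rough illustration of how such requests could be assembled in the Python backend, the sketch below composes a prompt that asks for an HTML page. The function name `build_lesson_prompt` and the exact wording of the instruction are assumptions made for this example; they are not taken from the project code.

```python
def build_lesson_prompt(equipment: str, question: str) -> str:
    """Compose a prompt asking for an HTML lesson (hypothetical wording)."""
    equipment = equipment.strip()
    question = question.strip()
    if not equipment or not question:
        raise ValueError("both equipment and question are required")
    return (
        f"Create me an HTML page about {equipment} that answers this question: "
        f"{question} Return only valid HTML, without any Markdown code fences."
    )


prompt = build_lesson_prompt(
    "Gravity: Electrochemical Alcohol Sensor",
    "Why is alcohol concentration monitored in labs?",
)
```

Asking explicitly for bare HTML is a design choice that makes the response easier to embed in a dashboard page without post-processing.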

#️⃣ Since I am not a talented graphic or logo designer, I also decided to employ Gemini to produce custom logos and CSS animations for the web dashboard. I specifically instructed Gemini to place each CSS animation in a separate HTML page, which helped me keep my primary web dashboard layout separate from the Gemini-generated elements. I added the gemini moniker to the file name of each Gemini-generated static lab sensor information page, animation page, and logo (image).

- gemini_alcohol_concentration.html

- gemini_fingerprint_waiting.html

- gemini_text_to_speech_stop_logo.png

Step 1: Configuring initial Arduino App Lab settings and determining suitable sensors for the ancillary lab assistant

Since Arduino UNO Q comes with the Arduino App Lab installed out of the box, I did not need to take any additional steps to run the App Lab in the SBC mode other than upgrading the Debian Linux operating system and the App Lab to their latest versions. However, to be able to utilize the network mode to program the lab assistant App Lab application remotely, I downloaded the Arduino App Lab on my workstation.

#️⃣ First, I connected a compatible USB dongle (hub), UGREEN 5-in-1, to the UNO Q in order to upgrade the Debian operating system and the Arduino App Lab.

#️⃣ After downloading the Arduino App Lab on my workstation, I created a new App Lab application to start developing my custom lab assistant application.

#️⃣ After successfully creating my lab assistant App Lab application, I meticulously searched for the most feasible lab sensors to enhance my ancillary lab assistant. As I have worked on multiple experimental research projects, I have had the chance to choose lab sensors from my ever-growing arsenal.

#️⃣ After selecting suitable sensors from my collection, I also added new ones to enable the ancillary lab assistant to provide a wide range of lab experiment options.

- Gravity: Electrochemical Alcohol Sensor | Guide

- Gravity: 1Kg Weight Sensor Kit - HX711 | Guide

- Gravity: Geiger Counter Module | Guide

- Gravity: Electrochemical Nitrogen Dioxide Sensor | Guide

- Grove: Integrated Pressure Sensor Kit (MPX5700AP) | Guide

- Grove: Water Atomization Sensor (Ultrasonic) | Guide

- Gravity: GNSS Positioning Module | Guide

#️⃣ As mentioned earlier, I decided to build an analog assistant interface to enable observing real-time sensor readings manually and activating web dashboard accounts via fingerprint authentication. Thus, I also connected these components to the UNO Q.

- DFRobot Capacitive Fingerprint Sensor (UART) | Guide

- Waveshare 1.28" Round LCD Display Module (GC9A01) | Guide

#️⃣ Although UNO Q provides 3.3V and 5V power lines, it would not be feasible to power all of these current-heavy components directly from the board. Thus, I utilized a buck-boost converter to supply all components requiring 3.3V via an external power source.

#️⃣ Since the pinout and dimensions of the Arduino UNO Q are equivalent to the standard Arduino Uno's, connecting all components was straightforward.

// Connections
// Arduino UNO Q :
//                                Capacitive Fingerprint Sensor (UART)
// 3.3V ------------------------ VIN
// GND ------------------------ GND
// D1 / USART1_TX ----------------- RX
// D0 / USART1_RX ----------------- TX
// 3.3V ------------------------ 3V3
//                                Gravity: Electrochemical Alcohol Sensor
// 3.3V ------------------------ +
// GND ------------------------ -
// SCL ------------------------ C/R
// SDA ------------------------ D/T
//                                Gravity: 1Kg Weight Sensor Kit - HX711
// 3.3V ------------------------ VCC
// GND ------------------------ GND
// SCL ------------------------ SCL
// SDA ------------------------ SDA
//                                Gravity: Geiger Counter Module - Ionizing Radiation Detector
// GND ------------------------ -
// 3.3V ------------------------ +
// D2 ------------------------ D
//                                Gravity: Electrochemical Nitrogen Dioxide Sensor - NO2
// 3.3V ------------------------ +
// GND ------------------------ -
// SCL ------------------------ C/R
// SDA ------------------------ D/T
//                                Grove - Integrated Pressure Sensor Kit - MPX5700AP
// GND ------------------------ GND
// 3.3V ------------------------ VCC
// A0 ------------------------ SIG
//                                Grove - Water Atomization Sensor - Ultrasonic
// GND ------------------------ GND
// 5V ------------------------ VCC
// D4 ------------------------ EN
//                                Gravity: GNSS Positioning Module
// 3.3V ------------------------ +
// GND ------------------------ -
// SCL ------------------------ C/R
// SDA ------------------------ D/T
//                                Waveshare - 1.28inch Round LCD Display Module
// 3.3V ------------------------ VCC
// GND ------------------------ GND
// D11 ------------------------ DIN
// D13 ------------------------ CLK
// D10 ------------------------ CS
// D7 ------------------------ DC
// D8 ------------------------ RST
// D9 ------------------------ BL
//                                Control Button (A)
// A1 ------------------------ +
//                                Control Button (B)
// A2 ------------------------ +
//                                Control Button (C)
// A3 ------------------------ +
//                                Control Button (D)
// A4 ------------------------ +
//                                5mm Common Anode RGB LED
// D3 ------------------------ R
// D5 ------------------------ G
// D6 ------------------------ B

#️⃣ To enable the ancillary lab assistant to identify specific lab equipment via object detection, I attached a USB camera (PK-910H) to the UNO Q through the USB dongle (hub).

#️⃣ As I already had a spare one, I used an official Raspberry Pi 5.1V / 3.0A USB-C power supply to power the UNO Q through the USB hub. Nonetheless, you can use any power supply compatible with the UNO Q specifications.

Step 1.1: Adding and revising the sketch libraries to make the target sensors compatible with the UNO Q

Even though Arduino UNO Q shares the same layout with the standard Arduino Uno, the MCU structure (STM32) and the bootloader (which runs on the Zephyr RTOS) are completely different. Thus, I needed to add component libraries that were not present in the provided App Lab library collection and heavily modify most of the component libraries to make them compatible with the UNO Q structure.

#️⃣ First, I added sketch libraries available in the provided App Lab library collection, including the MessagePack (msgpack) library, which is essential to utilize the Arduino Router service on the MCU.

#️⃣ Then, I created a folder named customLibs under the lab assistant application's sketch folder and installed libraries that were not present in the provided library collection.

#️⃣ To enable the App Lab to access the custom libraries, I edited the sketch.yaml file accordingly via the default command-line text editor (GNU nano).

- dir: customLibs/<lib_name>

- dir: customLibs/DFRobot_MultiGasSensor

#️⃣ There were a plethora of sketch library incompatibilities and errors, especially with lab sensor libraries. For each error, I pinpointed the faulty code and deliberately modified files via the GNU nano text editor.

#️⃣ Unlike the Arduino IDE, the Arduino App Lab does not share sketch libraries between applications, since each App Lab application is a single Docker project. Thus, I needed to target the library paths assigned to the lab assistant App Lab application while editing files installed directly by the App Lab. When the App Lab created folder names with spaces, I enclosed the path in quotes (") on the terminal to access the required files. In contrast, revising the custom libraries I added under the customLibs folder via the terminal was straightforward.

sudo nano /home/arduino/.arduino15/internal/<target_library>/<target_file>

sudo nano /home/arduino/.arduino15/internal/DFRobot_HX711_I2C_1.0.0_d8304db78735c6a3/DFRobot_HX711_I2C/DFRobot_HX711_I2C.h
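If such commands are ever generated from a script, quoting paths that contain spaces can be delegated to Python's standard `shlex.quote`. The sketch below is only an illustration: the `nano_command` helper and the `My Library` folder name are hypothetical, and `shlex.quote` uses single quotes rather than the double quotes mentioned above, which the shell treats equivalently for plain paths like these.

```python
import shlex


def nano_command(path: str) -> str:
    """Build a shell command string that opens `path` in GNU nano with sudo."""
    # shlex.quote leaves safe paths untouched and wraps others in single quotes.
    return f"sudo nano {shlex.quote(path)}"


# A path without spaces stays as-is; a path with a space gets quoted.
plain = nano_command("/home/arduino/.arduino15/internal/lib/file.h")
spaced = nano_command("/home/arduino/.arduino15/internal/My Library/file.h")
```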

#️⃣ After installing different library versions, modifying them, and making sure each component works as intended, I copied all the libraries I modified from the internal App Lab sketch library folder and added them to my custom libraries under the customLibs folder.

- dir: customLibs/modded_Adafruit_GC9A01A_1.1.1

- dir: customLibs/modded_DFRobot_Alcohol_1.0.0

#️⃣ I decided to save nearly all sketch libraries locally to ensure that the lab assistant App Lab application works without any additional code or library modification once imported via the provided ZIP folder. You can visit the project GitHub repository to inspect all code files and download the ZIP folder.

Step 2: Programming the Arduino sketch executed by the STM32U585 (MCU)

📁 logo.h

To prepare monochromatic images in order to display custom logos on the round LCD module (GC9A01), I followed the process below.

#️⃣ First, I converted monochromatic bitmaps to compatible C data arrays by utilizing LCD Assistant.

#️⃣ Based on the round display type, I selected the Horizontal byte orientation.

#️⃣ After converting all logos successfully, I created this header file — logo.h — to store them.
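For readers curious about what the horizontal byte orientation means, the following Python sketch packs rows of monochrome pixels into bytes, left to right and most significant bit first, padding each row to a whole byte. This is the layout that Adafruit GFX's drawBitmap reads; whether it matches LCD Assistant's output byte for byte is an assumption, and the helper names and the `demo_bits` array name are made up for this example.

```python
from typing import List, Sequence


def pack_rows_msb_first(pixels: Sequence[Sequence[int]]) -> List[int]:
    """Pack rows of 0/1 pixels into bytes, left to right, MSB first.

    Each row is padded with zero bits to a whole number of bytes.
    """
    packed: List[int] = []
    for row in pixels:
        for start in range(0, len(row), 8):
            chunk = list(row[start:start + 8])
            chunk += [0] * (8 - len(chunk))  # pad the last byte of the row
            byte = 0
            for bit in chunk:
                byte = (byte << 1) | (1 if bit else 0)
            packed.append(byte)
    return packed


def as_c_array(name: str, data: Sequence[int]) -> str:
    """Render the packed bytes as a C array declaration (name is illustrative)."""
    body = ", ".join(f"0x{value:02X}" for value in data)
    return f"const unsigned char {name}[] = {{ {body} }};"


# A 10x2 test pattern: first row fully set, second row alternating.
image = [
    [1] * 10,
    [1, 0, 1, 0, 1, 0, 1, 0, 1, 0],
]
packed = pack_rows_msb_first(image)
declaration = as_c_array("demo_bits", packed)
```

Because each 10-pixel row occupies two bytes, the width passed to the drawing call must be the real pixel width (10), not the padded one (16).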

📁 color_theme.h

#️⃣ In this header file, I assigned global HEX variables (compatible with the Adafruit GFX library) to create the primary color theme for the analog lab assistant interface.
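Adafruit GFX colors are 16-bit values in the 5-6-5 format (5 bits red, 6 bits green, 5 bits blue). As a small aid for picking theme values, this Python sketch converts a regular 24-bit RGB color into that format; the function name is an assumption for this example, and the sample colors are generic rather than the theme values used in color_theme.h.

```python
def rgb888_to_rgb565(red: int, green: int, blue: int) -> int:
    """Convert 8-bit-per-channel RGB into a 16-bit 5-6-5 color value."""
    for channel in (red, green, blue):
        if not 0 <= channel <= 255:
            raise ValueError("channels must be in the range 0-255")
    # Keep the top 5 bits of red, top 6 of green, and top 5 of blue.
    return ((red & 0xF8) << 8) | ((green & 0xFC) << 3) | (blue >> 3)


white = rgb888_to_rgb565(255, 255, 255)
pure_red = rgb888_to_rgb565(255, 0, 0)
teal = rgb888_to_rgb565(0, 128, 128)
hex_literal = f"0x{teal:04X}"  # formatted the way it would appear in a header
```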

📁 sketch.ino

⭐ Include the required sketch libraries.

#include <Arduino_RouterBridge.h>
#include <DFRobot_ID809.h>
#include "DFRobot_Alcohol.h"
#include <DFRobot_HX711_I2C.h>
#include "DFRobot_MultiGasSensor.h"
#include <DFRobot_Geiger.h>
#include "DFRobot_GNSS.h"
#include "SPI.h"
#include "Adafruit_GFX.h"
#include "Adafruit_GC9A01A.h"

⭐ Import custom logos (C data arrays) and the provided HEX color variables.

#include "logo.h"
// Import the custom color theme.
#include "color_theme.h"

⭐ Define the round LCD (GC9A01) screen configurations and declare the GC9A01 class instance.

#define SCREEN_WIDTH 240
#define SCREEN_HEIGHT 240
#define TFT_DC D7
#define TFT_CS D10
Adafruit_GC9A01A tft(TFT_CS, TFT_DC);

⭐ Define the configurations and the class instance for the electrochemical alcohol sensor. Its collection range can be set between 1 and 100, and the sensor reports the final result as the average of that many of the latest items in its data collection array. Its default I2C address can be altered via the onboard DIP switch.

/*

1) The available collection range is between 1 and 100. The sensor generates the final result as the average of the given number (collection range) of the latest data collection array items.

2) The available I2C addresses are as follows. Please use the onboard DIP switch to change the default I2C address.

                  | A0 | A1 |
ALCOHOL_ADDRESS_0 | 0  | 0  | 0x72
ALCOHOL_ADDRESS_1 | 1  | 0  | 0x73
ALCOHOL_ADDRESS_2 | 0  | 1  | 0x74
ALCOHOL_ADDRESS_3 | 1  | 1  | 0x75 (Default)

*/

#define alcohol_collect_num 10

DFRobot_Alcohol_I2C alcohol_sensor(&Wire, ALCOHOL_ADDRESS_3);
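The averaging over the latest readings described above can be modeled in a few lines of Python. This is a host-side sketch of the idea only, not the DFRobot library's internal implementation; the `LatestAverage` class is a hypothetical name, and its `collect_num` argument mirrors the `alcohol_collect_num` setting.

```python
from collections import deque


class LatestAverage:
    """Average of the most recent `collect_num` samples (1 to 100)."""

    def __init__(self, collect_num: int) -> None:
        if not 1 <= collect_num <= 100:
            raise ValueError("collect_num must be between 1 and 100")
        # A bounded deque silently drops the oldest sample when full.
        self._samples = deque(maxlen=collect_num)

    def add(self, value: float) -> float:
        """Store a new sample and return the current average."""
        self._samples.append(value)
        return sum(self._samples) / len(self._samples)


averager = LatestAverage(3)
history = [averager.add(v) for v in (1.0, 2.0, 3.0, 7.0)]
```

A larger collection range smooths out noise but makes the reported value respond more slowly to real changes, which is the trade-off behind choosing a value such as 10.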

⭐ Define the configurations and the class instance for the electrochemical nitrogen dioxide (NO2) sensor. Its default I2C address can be altered via the onboard DIP switch.

/*

1) The available I2C addresses are as follows. Please use the onboard DIP switch to change the default I2C address.

| A0 | A1 |
| 0  | 0  | 0x74 (Default)
| 0  | 1  | 0x75
| 1  | 0  | 0x76
| 1  | 1  | 0x77

*/

DFRobot_GAS_I2C no2_gas_sensor(&Wire, 0x74);

⭐ Define the configurations and the class instance for the HX711 weight sensor. Its default I2C address can be altered via the onboard DIP switch.

/*

1) The available I2C addresses are as follows. Please use the onboard DIP switch to change the default I2C address.

| A0 | A1 |
| 0  | 0  | 0x64 (Default)
| 1  | 0  | 0x65
| 0  | 1  | 0x66
| 1  | 1  | 0x67

*/

DFRobot_HX711_I2C weight_sensor(&Wire,/*addr=*/0x64);

⭐ Define the configurations and the class instance for the GNSS positioning module. Once the module acquires a strong signal to obtain a full set of satellite positioning data, its onboard LED should turn from red to green.

/*
1) The default I2C address is 0x20.
2) Once the module acquires a GPS signal successfully, the onboard LED should turn from red to green.
*/
DFRobot_GNSS_I2C gnss_sensor(&Wire, GNSS_DEVICE_ADDR);
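Since all four of these sensors share the same I2C bus, their addresses must be unique. The Python sketch below checks the addresses used in the declarations above for collisions; the `find_conflicts` helper is a hypothetical name introduced for this example, and the second call shows what would happen if the alcohol sensor were switched to ALCOHOL_ADDRESS_2.

```python
from typing import Dict, List

# Addresses taken from the sensor declarations in the sketch.
DEFAULT_ADDRESSES: Dict[str, int] = {
    "alcohol sensor": 0x75,
    "NO2 sensor": 0x74,
    "HX711 weight sensor": 0x64,
    "GNSS module": 0x20,
}


def find_conflicts(addresses: Dict[str, int]) -> Dict[int, List[str]]:
    """Return every address that is claimed by more than one device."""
    by_address: Dict[int, List[str]] = {}
    for device, address in addresses.items():
        by_address.setdefault(address, []).append(device)
    return {addr: devs for addr, devs in by_address.items() if len(devs) > 1}


conflicts = find_conflicts(DEFAULT_ADDRESSES)

# Hypothetical misconfiguration: alcohol sensor moved to ALCOHOL_ADDRESS_2 (0x74).
changed = dict(DEFAULT_ADDRESSES, **{"alcohol sensor": 0x74})
clash = find_conflicts(changed)
```

With the addresses as declared, no conflicts are reported, whereas the alternative DIP switch setting would collide with the NO2 sensor.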

⭐ If you need to print sensor readings and system notifications on the App Lab monitor for debugging, change this value to true after initiating the built-in Monitor.

⭐ However, do not use the Monitor outside of debugging since the sketch functions provided to the Bridge (RPC) would not be registered by the Router service.

volatile boolean __debug_monitor = false;

⭐ Declare the necessary parameters for saving sensor readings by creating a struct.

struct sensor_readings {
  // Timestamp of the latest cycle start and the read interval (microseconds).
  unsigned long latest_read_time, read_offset = 1000000;
  float pressure;
  float alcohol_concentration;
  float weight;
  // NO2 sensor readings.
  struct _no2{ float concentration; int board_temp; };
  struct _no2 _no2;
  // Geiger counter readings.
  struct _geiger{ int cpm, nsvh, usvh; };
  struct _geiger _geiger;
  // GNSS module readings.
  struct _gnss{ String date, utc; char lat_dir, lon_dir; double latitude, longitude, altitude, sog, cog; };
  struct _gnss _gnss;
  String water_atomization = "OFF";
};

⭐ Initiate the Arduino Router (Bridge) background Linux service to borrow and run functions between Qualcomm MPU and STM32 MCU interchangeably.

Bridge.begin();

⭐ Uncomment this line if you need to initiate the integrated App Lab monitor for debugging.

//Monitor.begin();

⭐ Provide the interface_web_control sketch function to the Router (Bridge) service to enable the Qualcomm MPU to access and execute it directly on the STM32 MCU.

Bridge.provide("interface_web_control", interface_web_control);

⭐ Initiate the hardware serial port to communicate with the capacitive fingerprint sensor (UART).

Serial.begin(115200); delay(1000);

⭐ Initiate sensors and check their connection status to notify the user accordingly on the round GC9A01 screen.

⭐ After successfully setting up all sensors, define the current time (microseconds) to perform precise subsequent readings for each sensor.

sensor_readings.latest_read_time = micros();

⭐ In the obtain_sensor_readings function:

⭐ Spacing the reads at 1-second intervals, calculate and save the sensor readings (variables) for each lab sensor without suspending code flow.

⭐ After successfully collecting all sensor variables (every six seconds), invoke the borrowed update_sensor_on_app Python function to pass the collected sensor variables to the Python background through the Arduino Router service (MessagePack RPC).

⭐ Finally, restart the sensor reading timer.
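The staged, non-blocking timing described here can be modeled on a workstation before touching the hardware. The Python sketch below is such a model, not the sketch's own logic: the `StagedReader` class is a hypothetical name, and it runs each stage exactly once per cycle. In the function that follows, each `if` condition stays true until the timer restarts, so earlier stages can be read again on every pass; running each stage once is therefore an assumption about the desired behavior.

```python
from typing import Callable, List


class StagedReader:
    """Run one stage per interval, then report when a full cycle is complete."""

    def __init__(self, stages: List[Callable[[], None]], interval_us: int,
                 start_us: int = 0) -> None:
        self._stages = stages
        self._interval_us = interval_us
        self._cycle_start_us = start_us
        self._next_stage = 0

    def poll(self, now_us: int) -> bool:
        """Call often from the main loop; True means a cycle just finished."""
        elapsed = now_us - self._cycle_start_us
        # Run every stage whose time slot has been reached, once per cycle.
        while (self._next_stage < len(self._stages)
               and elapsed >= (self._next_stage + 1) * self._interval_us):
            self._stages[self._next_stage]()
            self._next_stage += 1
        # One extra interval after the last stage closes the cycle.
        cycle_length = (len(self._stages) + 1) * self._interval_us
        if self._next_stage == len(self._stages) and elapsed >= cycle_length:
            self._cycle_start_us = now_us
            self._next_stage = 0
            return True
        return False


# Simulate 6.5 seconds of loop passes, one every 250 ms.
log: List[str] = []
names = ["pressure", "alcohol", "weight+geiger", "no2", "gnss"]
reader = StagedReader([lambda n=n: log.append(n) for n in names],
                      interval_us=1_000_000)
finished_at = [t for t in range(0, 6_500_001, 250_000) if reader.poll(t)]
```

In this model, the moment `poll` returns True corresponds to the point where the collected readings would be sent to the backend and the timer restarted.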

void obtain_sensor_readings(unsigned long read_offset){

if(micros() - sensor_readings.latest_read_time >= read_offset){

pressure_sensor.raw_value = 0;

for(int x = 0; x < pressure_sensor.collection_range; x++) pressure_sensor.raw_value = pressure_sensor.raw_value + analogRead(pressure_sensor.c_pin);

sensor_readings.pressure = (pressure_sensor.raw_value - pressure_sensor.offset) * 700.0 / (pressure_sensor.full_scale - pressure_sensor.offset);

//if(__debug_monitor){Monitor.print("Pressure sensor raw value (A/D) is "); Monitor.print(pressure_sensor.raw_value); Monitor.print("\nEstimated pressure is "); Monitor.print(sensor_readings.pressure); Monitor.println(" kPa\n");}

}

if(micros() - sensor_readings.latest_read_time >= 2*read_offset){

sensor_readings.alcohol_concentration = alcohol_sensor.readAlcoholData(alcohol_collect_num);

if(sensor_readings.alcohol_concentration == ERROR) sensor_readings.alcohol_concentration = -1;

//if(__debug_monitor){ Monitor.print("Alcohol concentration is "); Monitor.print(sensor_readings.alcohol_concentration); Monitor.println(" PPM.\n"); }

}

if(micros() - sensor_readings.latest_read_time >= 3*read_offset){

sensor_readings.weight = weight_sensor.readWeight();

if(sensor_readings.weight < 0.5) sensor_readings.weight = 0;

//if(__debug_monitor){ Monitor.print("Estimated weight is "); Monitor.print(sensor_readings.weight); Monitor.println(" g.\n"); }

sensor_readings._geiger.cpm = geiger.getCPM();

sensor_readings._geiger.nsvh = geiger.getnSvh();

sensor_readings._geiger.usvh = geiger.getuSvh();

//if(__debug_monitor){Monitor.print("CPM: "); Monitor.println(sensor_readings._geiger.cpm); Monitor.print("nSv/h: "); Monitor.println(sensor_readings._geiger.nsvh); Monitor.print("μSv/h "); Monitor.println(sensor_readings._geiger.usvh);}

}

if(micros() - sensor_readings.latest_read_time >= 4*read_offset){

sensor_readings._no2.concentration = no2_gas_sensor.readGasConcentrationPPM();

sensor_readings._no2.board_temp = no2_gas_sensor.readTempC();

//if(__debug_monitor){ Monitor.print("NO2 concentration is: "); Monitor.print(sensor_readings._no2.concentration); Monitor.println(" PPM\n"); Monitor.print("NO2 sensor board temperature is: "); Monitor.print(sensor_readings._no2.board_temp); Monitor.println(" ℃\n"); }

}

if(micros() - sensor_readings.latest_read_time >= 5*read_offset){

sTim_t utc = gnss_sensor.getUTC();

sTim_t date = gnss_sensor.getDate();

sLonLat_t lat = gnss_sensor.getLat();

sLonLat_t lon = gnss_sensor.getLon();

sensor_readings._gnss.date = String(date.year) + "/" + String(date.month) + "/" + String(date.date); sensor_readings._gnss.utc = String(utc.hour) + "_" + String(utc.minute) + "_" + String(utc.second);

sensor_readings._gnss.lat_dir = (char)lat.latDirection; sensor_readings._gnss.lon_dir = (char)lon.lonDirection;

sensor_readings._gnss.latitude = lat.latitudeDegree; sensor_readings._gnss.longitude = lon.lonitudeDegree;

sensor_readings._gnss.altitude = gnss_sensor.getAlt();

sensor_readings._gnss.sog = gnss_sensor.getSog(); // Speed Over Ground

sensor_readings._gnss.cog = gnss_sensor.getCog(); // Course Over Ground

//if(__debug_monitor){ Monitor.print("GNSS (latitude): "); Monitor.print(sensor_readings._gnss.latitude); Monitor.print("GNSS (longitude): "); Monitor.print(sensor_readings._gnss.longitude); Monitor.print("GNSS (altitude): "); Monitor.print(sensor_readings._gnss.altitude); }

}

if(micros() - sensor_readings.latest_read_time >= 6*read_offset){

// After collecting all sensor variables, invoke the borrowed Python function via the Arduino Router using MessagePack RPC.

Bridge.call("update_sensor_on_app", sensor_readings.pressure, sensor_readings.alcohol_concentration, sensor_readings.weight, sensor_readings._no2.concentration, sensor_readings._no2.board_temp, sensor_readings._geiger.cpm, sensor_readings._geiger.nsvh, sensor_readings._geiger.usvh, sensor_readings._gnss.date, sensor_readings._gnss.utc, sensor_readings._gnss.lat_dir, sensor_readings._gnss.lon_dir, sensor_readings._gnss.latitude, sensor_readings._gnss.longitude, sensor_readings._gnss.altitude, sensor_readings._gnss.sog, sensor_readings._gnss.cog);

// Restart the sensor reading timer.

sensor_readings.latest_read_time = micros();

}

}
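The pressure branch of this function sums several analog readings and maps the sum linearly onto a 0 to 700 kPa range. The following Python sketch reproduces that arithmetic so the calibration can be sanity-checked on a workstation. The `offset` of 410 and `full_scale` of 9630 are placeholder calibration values chosen for this example (sized for a sum of ten readings); they are assumptions, not the project's calibrated values.

```python
from typing import Sequence


def estimate_pressure_kpa(samples: Sequence[int], offset: float,
                          full_scale: float) -> float:
    """Map the sum of raw ADC samples linearly onto 0-700 kPa.

    `offset` is the summed reading at 0 kPa and `full_scale` the summed
    reading at 700 kPa, both for the same number of samples.
    """
    if full_scale == offset:
        raise ValueError("full_scale and offset must differ")
    raw_value = sum(samples)
    return (raw_value - offset) * 700.0 / (full_scale - offset)


# Placeholder calibration for a sum of ten readings (assumed values).
OFFSET = 410
FULL_SCALE = 9630

at_zero = estimate_pressure_kpa([41] * 10, OFFSET, FULL_SCALE)
at_half = estimate_pressure_kpa([502] * 10, OFFSET, FULL_SCALE)
at_full = estimate_pressure_kpa([963] * 10, OFFSET, FULL_SCALE)
```

Note that the calibration constants must correspond to the same number of summed samples as the collection range; changing one without the other would scale the result incorrectly.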

⭐ In the show_sensor_screen function:

⭐ According to the provided sensor information, display the lab sensor data on the round GC9A01 screen.

⭐ By checking the latest sensor screen update, avoid flickering due to drawing the same interface consecutively.

void show_sensor_screen(String title, String title_exp, String sensor_value, String sensor_unit, int _theme){

int l_1_s = 6, l_2_s = 14, l_sp = 5;

int divider_w = SCREEN_WIDTH, divider_h = SCREEN_HEIGHT/4;

int title_w = (divider_w/5)*3, title_h = (divider_h/3)*2;

int logo_r = 40;

int panel_w = SCREEN_WIDTH-logo_r-(4*l_sp), panel_h = (2*logo_r)-(4*l_sp);

int inner_panel_w = panel_w-logo_r, inner_panel_h = panel_h-(2*l_sp);

int t_x_s = (logo_r+(2*l_sp)+logo_r-l_sp) + inner_panel_w/2;

int t_h_s = (SCREEN_HEIGHT/2)+(1.5*l_sp)-(l_2_s/2);

if(!shown_screen_sensor){

adjustColor(1,0,1);

tft.fillScreen(Q_teal);

tft.fillRect(0, 0, divider_w, divider_h, Q_grey);

tft.fillRoundRect((divider_w-title_w)/2, (divider_h/3)*2, title_w, title_h, 5, Q_golden);

tft.setTextSize(2); tft.setTextColor(Q_light_grey);

tft.setCursor((SCREEN_WIDTH-(title.length()*l_2_s))/2, ((divider_h/3)*2)+l_sp);

tft.print(title);

tft.setTextSize(1);

tft.setCursor((SCREEN_WIDTH-(title_exp.length()*l_1_s))/2, ((divider_h/3)*2)+title_h-l_1_s-l_sp);

tft.print(title_exp);

tft.fillCircle(logo_r+(2*l_sp), (SCREEN_HEIGHT/2)+(1.5*l_sp), logo_r, Q_primary);

tft.fillRoundRect(logo_r+(2*l_sp), (SCREEN_HEIGHT/2)+(1.5*l_sp)-(panel_h/2), panel_w, panel_h, 5, Q_primary);

tft.drawBitmap(logo_r+(2*l_sp)-(sensor_logo_w[_theme]/2), (SCREEN_HEIGHT/2)+(1.5*l_sp)-(sensor_logo_h[_theme]/2), sensor_logo_bit[_theme], sensor_logo_w[_theme], sensor_logo_h[_theme], Q_white);

tft.fillRect(logo_r+(2*l_sp)+logo_r-l_sp, (SCREEN_HEIGHT/2)+(1.5*l_sp)-(inner_panel_h/2), inner_panel_w, inner_panel_h, Q_cyan);

tft.setTextSize(2); tft.setTextColor(Q_white);

tft.setCursor(t_x_s-((sensor_value.length()*l_2_s)/2), t_h_s);

tft.print(sensor_value);

tft.fillRect(0, SCREEN_HEIGHT-divider_h, divider_w, divider_h, Q_grey);

tft.setTextSize(2); tft.setTextColor(Q_cyan);

tft.setCursor((SCREEN_WIDTH-(sensor_unit.length()*l_2_s))/2, SCREEN_HEIGHT-(divider_h/2)-(l_2_s/2));

tft.print(sensor_unit);

}else{

tft.fillRect(logo_r+(2*l_sp)+logo_r-l_sp, (SCREEN_HEIGHT/2)+(1.5*l_sp)-(inner_panel_h/2), inner_panel_w, inner_panel_h, Q_cyan);

tft.setTextSize(2); tft.setTextColor(Q_white);

tft.setCursor(t_x_s-((sensor_value.length()*l_2_s)/2), t_h_s);

tft.print(sensor_value);

}

// Avoid flickering due to drawing the same interface consecutively.

shown_screen_sensor = true;

}
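The flicker-avoidance idea in show_sensor_screen can be modeled independently of the display driver. The toy Python class below is only a sketch of the pattern: it records which drawing path would run instead of drawing anything, and the class and method names are hypothetical.

```python
class SensorScreen:
    """Toy model of the draw-once pattern: full layout first, value panel afterwards."""

    def __init__(self):
        self.shown = False      # plays the role of shown_screen_sensor
        self.operations = []    # records what would have been drawn

    def show(self, title, value):
        if not self.shown:
            # First call after an invalidation: redraw the whole interface.
            self.operations.append(("full_layout", title, value))
        else:
            # Subsequent calls: repaint only the panel holding the sensor value.
            self.operations.append(("value_panel", value))
        self.shown = True

    def invalidate(self):
        """Force a full redraw on the next call, e.g. after a button press."""
        self.shown = False


screen = SensorScreen()
screen.show("NO2", "0.02")
screen.show("NO2", "0.03")
screen.invalidate()
screen.show("Weight", "12.5")
print(screen.operations)
```

Only the small value panel is repainted while the same screen stays active, which is what keeps the static parts of the interface from flashing.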

⭐ In the show_fingerprint_task_screen function:

⭐ According to the requested fingerprint task and its related color theme, show the ongoing fingerprint task information on the round GC9A01 screen.

⭐ By checking the latest fingerprint screen update, avoid flickering due to drawing the same interface consecutively.

void show_fingerprint_task_screen(String title, String title_exp, uint16_t bg_color, uint16_t t_color){

int l_1_s = 6, l_2_s = 14, l_sp = 5;

int divider_w = SCREEN_WIDTH, divider_h = SCREEN_HEIGHT-fingerprint_h-(5*l_sp);

if(!shown_screen_fingerprint){

adjustColor(1,1,1);

tft.fillScreen(Q_primary);

tft.drawBitmap((SCREEN_WIDTH-fingerprint_w)/2, 2*l_sp, fingerprint_bits, fingerprint_w, fingerprint_h, bg_color);

tft.fillRect(0, SCREEN_HEIGHT-divider_h, divider_w, divider_h, bg_color);

tft.setTextSize(2); tft.setTextColor(t_color);

tft.setCursor(((SCREEN_WIDTH-(title.length()*l_2_s))/2)+(2*l_sp), SCREEN_HEIGHT-divider_h+l_sp);

tft.print(title);

tft.setTextSize(1);

tft.setCursor((SCREEN_WIDTH-(title_exp.length()*l_1_s))/2, SCREEN_HEIGHT-(5*l_sp));

tft.print(title_exp);

}

// Avoid flickering due to drawing the same interface consecutively.

shown_screen_fingerprint = true;

}

⭐ In the show_err_screen function, notify the user of the provided system error information via the round screen.

void show_err_screen(String title, String title_exp, String err_description){

int l_1_s = 6, l_2_s = 14, l_sp = 5;

int divider_w = SCREEN_WIDTH, divider_h = SCREEN_HEIGHT/4;

int title_w = (divider_w/5)*3, title_h = (divider_h/3)*2;

int logo_r = 36;

tft.fillScreen(Q_teal);

tft.fillRect(0, 0, divider_w, divider_h, Q_red);

tft.fillRoundRect((divider_w-title_w)/2, (divider_h/3)*2, title_w, title_h, 5, Q_golden);

tft.setTextSize(2); tft.setTextColor(Q_light_grey);

tft.setCursor((SCREEN_WIDTH-(title.length()*l_2_s))/2, ((divider_h/3)*2)+l_sp);

tft.print(title);

tft.setTextSize(1);

tft.setCursor((SCREEN_WIDTH-(title_exp.length()*l_1_s))/2, ((divider_h/3)*2)+title_h-l_1_s-l_sp);

tft.print(title_exp);

tft.fillCircle(SCREEN_WIDTH/2, (SCREEN_HEIGHT/2)+(1.5*l_sp), logo_r, Q_red);

tft.drawBitmap((SCREEN_WIDTH-error_w)/2, ((SCREEN_HEIGHT-error_h)/2)+(1.5*l_sp), error_bits, error_w, error_h, Q_white);

tft.fillRect(0, SCREEN_HEIGHT-divider_h, divider_w, divider_h, Q_red);

tft.setTextSize(2); tft.setTextColor(Q_white);

tft.setCursor((SCREEN_WIDTH-(err_description.length()*l_2_s))/2, SCREEN_HEIGHT-(divider_h/2)-(l_2_s/2));

tft.print(err_description);

}

⭐ In the manage_fingerprint_task function:

⭐ If the check_id fingerprint task is requested:

⭐ Wait until the user places a finger onto the capacitive fingerprint sensor. Then, capture a fingerprint scan image.

⭐ Notify the user that the fingerprint image has been captured successfully via the respective task interface displayed by the round screen.

⭐ Wait until the user removes the finger touching the capacitive sensor.

⭐ Then, obtain the ID of the captured fingerprint scan if registered in the sensor's fingerprint library - ID (1-80).

⭐ According to the enrollment status, notify the user by displaying the respective interface on the round screen.

⭐ If the sensor cannot capture a fingerprint scan precisely, notify the user accordingly on the screen.

⭐ Finally, return to the home interface.

⭐ If the register_id fingerprint task is requested:

⭐ Via the built-in class instance, obtain an available fingerprint ID from the sensor's fingerprint library - ID (1-80) - for registering the new fingerprint.

⭐ Up to the given sampling number, capture fingerprint scan images consecutively by following the procedure below.

⭐ Wait until the user places a finger onto the capacitive fingerprint sensor. Then, capture a fingerprint scan image.

⭐ Notify the user that the fingerprint image has been captured successfully via the respective task interface displayed by the round screen.

⭐ Wait until the user removes the finger touching the capacitive sensor.

⭐ Proceed to capturing the subsequent fingerprint scan image.

⭐ If the sensor cannot capture a fingerprint scan image precisely for the given sample number, notify the user accordingly via the round screen and resume capturing a new scan for the same sample number.

⭐ After capturing fingerprint scan images successfully up to the requested sample number, record the new fingerprint to the provided unregistered ID.

⭐ Then, execute the borrowed manage_account_actions_on_stm32 Python function to inform the Python backend of the success of registering the new fingerprint and its given ID. If an error occurs while registering the new fingerprint, notify the Python backend accordingly with the given error codes.

⭐ Finally, return to the home interface.

⭐ If the verify_id fingerprint task is requested:

⭐ Wait until the user places a finger onto the capacitive fingerprint sensor. Then, capture a fingerprint scan image.

⭐ Notify the user that the fingerprint image has been captured successfully via the respective task interface displayed by the round screen.

⭐ Wait until the user removes the finger touching the capacitive sensor.

⭐ Then, obtain the ID of the captured fingerprint scan if registered in the sensor's fingerprint library - ID (1-80).

⭐ If the captured fingerprint scan is registered (enrolled) and its registration (fingerprint) ID corresponds with the requested (user) ID, execute the borrowed manage_account_actions_on_stm32 Python function to inform the Python backend that the current user's web dashboard account should be verified.

⭐ Otherwise, notify the Python backend accordingly and request the user to scan the accurate (registered) finger.

⭐ If the sensor cannot capture a fingerprint scan precisely, notify the user accordingly on the screen and wait until the next successful scan.

⭐ Finally, return to the home interface.

void manage_fingerprint_task(String task, uint8_t requested_id){

uint8_t result = 0;

if(task == "check_id"){

shown_screen_fingerprint = false;

show_fingerprint_task_screen("Check ID", "Please scan finger!", Q_cyan, Q_primary);

// Once the user places a finger onto the capacitive fingerprint sensor, capture the fingerprint image.

if(fingerprint.collectionFingerprint(/*timeout=*/0) != ERR_ID809){

// Then, notify the user that the fingerprint image was captured successfully via the respective task interface.

shown_screen_fingerprint = false;

show_fingerprint_task_screen("Captured", "Remove finger!", Q_golden, Q_primary);

// Wait until the user removes the captured finger.

while(fingerprint.detectFinger());

// Then, obtain the ID of the captured fingerprint if registered in the sensor's fingerprint library - ID(1-80).

result = fingerprint.search();

if(result != 0){

// If the captured fingerprint is registered (enrolled):

shown_screen_fingerprint = false;

show_fingerprint_task_screen("ID: "+String(result), "Successful!", Q_green, Q_primary);

delay(2000);

// Return to the home interface.

return_home();

}else{

// Otherwise, notify the user accordingly:

shown_screen_fingerprint = false;

show_fingerprint_task_screen("ID: N", "Not registered!", Q_magenta, Q_white);

delay(2000);

// Return to the home interface.

return_home();

}

}else{

// If the sensor cannot capture fingerprints precisely, notify the user accordingly.

shown_screen_fingerprint = false;

show_fingerprint_task_screen("Error", "Cannot capture!", Q_red, Q_white);

delay(2000);

// Return to the home interface.

return_home();

}

}

else if(task == "register_id"){

uint8_t register_ID;

int fingerprint_sampling_number = 3, current_sample = 0;

shown_screen_fingerprint = false;

show_fingerprint_task_screen("Register", "Please scan finger!", Q_cyan, Q_primary);

// Obtain an available fingerprint ID from the sensor's fingerprint library - ID(1-80) - for registering the new fingerprint.

register_ID = fingerprint.getEmptyID();

if(register_ID != ERR_ID809){

// Up to the given sampling number, capture fingerprint images consecutively.

while(current_sample < fingerprint_sampling_number){

// Once the user places a finger onto the capacitive fingerprint sensor, capture the fingerprint image.

if(fingerprint.collectionFingerprint(/*timeout=*/0) != ERR_ID809){

// Then, notify the user that the fingerprint image sample was captured successfully via the respective task interface.

shown_screen_fingerprint = false;

show_fingerprint_task_screen("Captured ["+String(current_sample+1)+"]", "Remove finger!", Q_golden, Q_primary);

// Proceed to capturing the following fingerprint image sample.

current_sample++;

// Wait until the user removes the captured finger.

while(fingerprint.detectFinger());

}else{

// If the sensor cannot capture fingerprint image samples precisely, notify the user accordingly.

shown_screen_fingerprint = false;

show_fingerprint_task_screen("Error", "Please reposition!", Q_red, Q_white);

delay(2000);

}

}

// After capturing fingerprint image samples successfully, record the new fingerprint to the provided unregistered ID.

if(fingerprint.storeFingerprint(/*Empty ID = */register_ID) != ERR_ID809){

shown_screen_fingerprint = false;

show_fingerprint_task_screen("Success ["+String(register_ID)+"]", "Registered!", Q_green, Q_primary);

delay(2000);

// Notify the Python backend accordingly via the borrowed function.

Bridge.call("manage_account_actions_on_stm32", "signup", register_ID);

// Return to the home interface.

return_home();

}else{

// If the sensor cannot save the new fingerprint to the provided ID, notify the user accordingly.

shown_screen_fingerprint = false;

show_fingerprint_task_screen("Error", "Cannot register!", Q_red, Q_white);

delay(2000);

// Notify the Python backend accordingly via the borrowed function.

Bridge.call("manage_account_actions_on_stm32", "signup", -1);

// Return to the home interface.

return_home();

}

}else{

// If the sensor cannot produce an unregistered ID, notify the user accordingly.

shown_screen_fingerprint = false;

show_fingerprint_task_screen("Error", "Cannot find ID!", Q_red, Q_white);

delay(2000);

// Notify the Python backend accordingly via the borrowed function.

Bridge.call("manage_account_actions_on_stm32", "signup", -2);

// Return to the home interface.

return_home();

}

}

else if(task == "verify_id"){

shown_screen_fingerprint = false;

show_fingerprint_task_screen("Verify User", "Please scan finger!", Q_cyan, Q_primary);

// Once the user places a finger onto the capacitive fingerprint sensor, capture the fingerprint image.

if(fingerprint.collectionFingerprint(/*timeout=*/0) != ERR_ID809){

// Then, notify the user that the fingerprint image was captured successfully via the respective task interface.

shown_screen_fingerprint = false;

show_fingerprint_task_screen("Captured", "Remove finger!", Q_golden, Q_primary);

// Wait until the user removes the captured finger.

while(fingerprint.detectFinger());

// Then, obtain the ID of the captured fingerprint if registered in the sensor's fingerprint library - ID(1-80).

result = fingerprint.search();

if(result != 0 && result == requested_id){

// If the captured fingerprint is registered (enrolled) and its ID corresponds with the requested ID, verify the user to utilize the web application (dashboard).

shown_screen_fingerprint = false;

show_fingerprint_task_screen("Matched!", "User verified!", Q_green, Q_primary);

delay(2000);

// Notify the Python backend accordingly via the borrowed function.

Bridge.call("manage_account_actions_on_stm32", "signin", result);

// Return to the home interface.

return_home();

}else{

// Otherwise, notify the user accordingly and wait until the user scans the accurate fingerprint:

shown_screen_fingerprint = false;

show_fingerprint_task_screen("Try again!", "Not verified!", Q_magenta, Q_white);

delay(2000);

// Notify the Python backend accordingly via the borrowed function.

Bridge.call("manage_account_actions_on_stm32", "signin", -1);

// Return to the home interface.

return_home();

}

}else{

// If the sensor cannot capture fingerprints precisely, notify the user accordingly and wait until the user scans the accurate fingerprint.

shown_screen_fingerprint = false;

show_fingerprint_task_screen("Error", "Cannot capture!", Q_red, Q_white);

delay(2000);

// Notify the Python backend accordingly via the borrowed function.

Bridge.call("manage_account_actions_on_stm32", "signin", -2);

}

}

}
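The sketch reports every fingerprint outcome to the backend as an action name plus one integer: a positive fingerprint ID on success, or -1 and -2 for the two failure cases of each task. The Python helper below is a hedged illustration of how those pairs could be decoded on the receiving side. The function name and the status wording are my own assumptions, not the project's manage_account_actions_on_stm32 implementation.

```python
def describe_account_action(action, code):
    """Translate an (action, code) pair sent by the sketch into a readable status."""
    failures = {
        ("signup", -1): "fingerprint could not be stored",
        ("signup", -2): "no free fingerprint ID available",
        ("signin", -1): "fingerprint did not match the requested user",
        ("signin", -2): "fingerprint could not be captured",
    }
    if action not in ("signup", "signin"):
        raise ValueError(f"unknown action: {action}")
    if code > 0:
        # Positive codes carry the fingerprint ID (1-80) returned by the sensor.
        verb = "registered" if action == "signup" else "verified"
        return f"{verb} with fingerprint ID {code}"
    return failures.get((action, code), "unrecognized status code")


print(describe_account_action("signup", 7))
print(describe_account_action("signin", -1))
```

Using negative numbers for errors works here because valid fingerprint IDs are always positive, so a single integer argument can carry both the result and the failure reason.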

⭐ Once the control button A (++) or the control button B (--) is pressed, update the analog interface states (lab sensor, home, and fingerprint task) and the requested lab sensor data screen (interface) number.

if(!digitalRead(control_button_A)){

current_sensor_screen++;

if(current_sensor_screen >= total_sensor_screen) current_sensor_screen = 0;

shown_screen_sensor = false;

activated_screen_home = false; shown_screen_home = false;

activated_screen_fingerprint = false; shown_screen_fingerprint = false;

delay(500);

}

if(!digitalRead(control_button_B)){

current_sensor_screen--;

if(current_sensor_screen < 0) current_sensor_screen = total_sensor_screen-1;

shown_screen_sensor = false;

activated_screen_home = false; shown_screen_home = false;

activated_screen_fingerprint = false; shown_screen_fingerprint = false;

delay(500);

}
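The two button handlers implement a wrap-around counter. The same behavior can be sketched in Python with a single modulo operation, assuming sixteen sensor screens to match cases 0 to 15 of the sketch's switch statement. The constant and function names are hypothetical.

```python
TOTAL_SENSOR_SCREENS = 16  # assumption: one screen per case (0-15) in the sketch


def next_screen(current, step, total=TOTAL_SENSOR_SCREENS):
    """Move forward (+1) or backward (-1) through the screens, wrapping at both ends."""
    return (current + step) % total


print(next_screen(15, +1))  # wraps forward to the first screen
print(next_screen(0, -1))   # wraps backward to the last screen
```

Python's % operator always returns a non-negative result for a positive divisor, whereas the C++ remainder of a negative number is negative, which is one reason the sketch uses explicit boundary checks instead.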

⭐ If the home or the fingerprint task interfaces are not activated, display the requested lab sensor data screen (interface).

if(!activated_screen_home && !activated_screen_fingerprint){

switch(current_sensor_screen){

case 0:

show_sensor_screen("NO2", "Concentration", String(sensor_readings._no2.concentration), "PPM", 0);

break;

case 1:

show_sensor_screen("NO2", "Board Temp.", String(sensor_readings._no2.board_temp), "C", 0);

break;

case 2:

show_sensor_screen("Alcohol", "Concentration", String(sensor_readings.alcohol_concentration), "PPM", 1);

break;

case 3:

show_sensor_screen("Weight", "Estimation", String(sensor_readings.weight), "G (g)", 2);

break;

case 4:

show_sensor_screen("Geiger", "Ionizing", String(sensor_readings._geiger.cpm), "CPM", 3);

break;

case 5:

show_sensor_screen("Geiger", "Ionizing", String(sensor_readings._geiger.nsvh), "nSv/h", 3);

break;

case 6:

show_sensor_screen("Geiger", "Ionizing", String(sensor_readings._geiger.usvh), "uSv/h", 3);

break;

case 7:

show_sensor_screen("Pressure", "Integrated", String(sensor_readings.pressure), "kPa", 4);

break;

case 8:

show_sensor_screen("Water", "Atomization", sensor_readings.water_atomization, "V", 5);

break;

case 9:

show_sensor_screen("GNSS", "Date", sensor_readings._gnss.date, "Y/M/D", 6);

break;

case 10:

show_sensor_screen("GNSS", "UTC", sensor_readings._gnss.utc, "H_M_S", 6);

break;

case 11:

show_sensor_screen("GNSS", "Latitude", String(sensor_readings._gnss.latitude), "Degrees", 6);

break;

case 12:

show_sensor_screen("GNSS", "Longitude", String(sensor_readings._gnss.longitude), "Degrees", 6);

break;

case 13:

show_sensor_screen("GNSS", "Altitude", String(sensor_readings._gnss.altitude), "M (m)", 6);

break;

case 14:

show_sensor_screen("GNSS", "Speed Over Ground", String(sensor_readings._gnss.sog), "SOG", 6);

break;

case 15:

show_sensor_screen("GNSS", "Course Over Ground", String(sensor_readings._gnss.cog), "COG", 6);

break;

}

}

⭐ Once activated, display the home (default) interface.

if(activated_screen_home) show_home_screen();

⭐ Once the control button D is pressed, return to the home interface, which is the default analog interface state.

if(!digitalRead(control_button_D)) return_home();

⭐ Once a fingerprint task is initiated, show its respective interface and perform the requested task until completion.

while(activated_screen_fingerprint){

// Start the requested fingerprint sensor task.

manage_fingerprint_task(ongoing_fingerprint_task, (uint8_t)provided_user_id);

}

⭐ If the control button C is pressed, initiate the check_id fingerprint task manually.

if(!digitalRead(control_button_C)){

shown_screen_sensor = false;

activated_screen_home = false; shown_screen_home = false;

activated_screen_fingerprint = true; shown_screen_fingerprint = false;

ongoing_fingerprint_task = "check_id";

delay(500);

}

⭐ As mentioned, the interface_web_control function is provided to the integrated Arduino Router background Linux service in order to let the Python backend communicate with the STM32 MCU by executing the given sketch function directly.

⭐ In this function, according to the given command:

⭐ Change the water atomization sensor state — ON or OFF.

⭐ Update the analog interface states and the requested lab sensor data screen (interface) number. If requested, return to the home (default) interface instead.

⭐ Update the analog interface states to initiate and perform the requested fingerprint task.

void interface_web_control(String command, int interface_num){

// Update the lab assistant interface (onboard) according to the provided user selection.

if(command == "update_interface" || command == "update_water_on" || command == "update_water_off"){

// Change the water atomization sensor state once requested by the user.

if(command == "update_water_on"){ sensor_readings.water_atomization = "ON"; digitalWrite(water_atomization_pin, HIGH); }

else if(command == "update_water_off"){ sensor_readings.water_atomization = "OFF"; digitalWrite(water_atomization_pin, LOW); }

if(interface_num != -1){

current_sensor_screen = interface_num;

shown_screen_sensor = false;

activated_screen_home = false; shown_screen_home = false;

activated_screen_fingerprint = false; shown_screen_fingerprint = false;

delay(500);

}else{

return_home();

}

}else{

shown_screen_sensor = false;

activated_screen_home = false; shown_screen_home = false;

activated_screen_fingerprint = true; shown_screen_fingerprint = false;

ongoing_fingerprint_task = command;

provided_user_id = interface_num;

delay(500);

}

}
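The branching in interface_web_control is easier to follow as a plain state update. The Python model below is a minimal sketch under two assumptions: that return_home() simply re-activates the home interface, and that the state can be reduced to the fields shown. The InterfaceState class and apply_command function are hypothetical names used only for illustration.

```python
from dataclasses import dataclass

SCREEN_COMMANDS = {"update_interface", "update_water_on", "update_water_off"}


@dataclass
class InterfaceState:
    current_sensor_screen: int = 0
    home_active: bool = True
    fingerprint_active: bool = False
    fingerprint_task: str = ""
    user_id: int = -1
    water_atomization: str = "OFF"


def apply_command(state, command, interface_num):
    """Update the modeled interface state the way the sketch reacts to a web command."""
    if command in SCREEN_COMMANDS:
        if command == "update_water_on":
            state.water_atomization = "ON"
        elif command == "update_water_off":
            state.water_atomization = "OFF"
        if interface_num != -1:
            # Show the requested lab sensor screen.
            state.current_sensor_screen = interface_num
            state.home_active = False
            state.fingerprint_active = False
        else:
            # Assumed effect of return_home(): fall back to the home interface.
            state.home_active = True
            state.fingerprint_active = False
    else:
        # Any other command is treated as a fingerprint task name.
        state.home_active = False
        state.fingerprint_active = True
        state.fingerprint_task = command
        state.user_id = interface_num
    return state


state = apply_command(InterfaceState(), "update_water_on", 8)
print(state)
state = apply_command(state, "verify_id", 12)
print(state)
```

Note that the second parameter is overloaded: it is a screen number for interface commands and a user ID for fingerprint tasks.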

📁 sketch.yaml

#️⃣ As mentioned, this file holds the sketch library configuration: the custom (local) libraries and the dependencies installed via the App Lab.

Step 3: Collecting images of different lab equipment to construct a valid data set

As mentioned earlier, this AI-driven ancillary lab assistant is the second iteration of my previous lab assistant project. Therefore, I already had a diverse set of lab equipment to construct my data set. Since I took a different approach and modified equipment image samples by applying specific built-in OpenCV filters in my previous project, I decided to collect fresh image samples and reduce the number of equipment types.

After mulling over different lab equipment options, I decided to construct my data set based on these items:

- Human skeleton model

- Microscope

- Alcohol burner

- Bunsen burner

- Dynamometer

I used my phone's camera to capture lab equipment image samples, even though I later added a sample collection option to the web dashboard.

Step 4: Building an object detection model (FOMO) w/ Edge Impulse Enterprise

Since Edge Impulse provides developer-friendly tools for advanced AI applications and supports almost every development board through its vast model deployment options, I decided to utilize Edge Impulse Enterprise to build my object detection model. Also, Edge Impulse Enterprise incorporates elaborate model architectures for advanced computer vision applications and optimizes the state-of-the-art vision models for edge devices and single-board computers such as Arduino UNO Q.

Among the diverse machine learning algorithms provided by Edge Impulse, I decided to employ FOMO (Faster Objects, More Objects) since it is a lightweight object detection algorithm optimized for both highly constrained edge devices and powerful single-board computers.

While labeling the lab equipment image samples, I simply applied the name of the target lab equipment:

- skeleton_model

- microscope

- alcohol_burner

- bunsen_burner

- dynamometer

Edge Impulse Enterprise gives developers advanced tools to build, optimize, and deploy each available machine learning algorithm as supported firmware for nearly any device you can think of. Furthermore, since Qualcomm has recently acquired both Arduino and Edge Impulse, there is an official pipeline to import Edge Impulse models directly into the Arduino App Lab by linking your Arduino account to Edge Impulse Studio.

To utilize the advanced AI tools provided by Edge Impulse, you can register here.

For further information, you can inspect this FOMO object detection model on Edge Impulse as a public project.

Step 4.1: Uploading and labeling the lab equipment image samples

#️⃣ First, I created a new project on my Edge Impulse Enterprise account.

#️⃣ To employ the bounding box labeling tool for object detection models, I navigated to Dashboard ➡ Project info ➡ Labeling method and selected Bounding boxes (object detection).

#️⃣ To upload training and testing lab equipment image samples as individual files, I opened the Data acquisition section and clicked the Upload data icon.

#️⃣ Then, I navigated to Data acquisition ➡ Labeling queue to access all unlabeled items (training and testing) remaining in the provided image data set.

#️⃣ After drawing bounding boxes around target objects, I clicked the Save labels button to complete labeling an image sample. Then, I repeated this process until every lab equipment image sample had at least one labeled target object.

Step 4.2: Training the FOMO object detection model

An impulse (an application developed and optimized by Edge Impulse) takes raw data, applies signal processing to extract features, and then utilizes a learning block to classify new data.

For my application, I created the impulse by employing the Image processing block and the Object Detection (Images) learning block.

The Image processing block processes the passed raw image input as grayscale or RGB (optional) to produce a reliable features array.

The Object Detection (Images) learning block represents the officially supported machine learning algorithms performing object detection.

#️⃣ First, I opened the Impulse design ➡ Create impulse section, set the model image resolution to 320 x 320, and selected the Fit shortest axis resize mode so as to scale (resize) the given image samples precisely. To complete the impulse creation, I clicked Save Impulse.
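To make the resize mode concrete, here is a short Python sketch of what fitting the shortest axis means geometrically, as I understand it: scale the image until its shorter side equals the model resolution, then center-crop the longer side. The function name is hypothetical, and Edge Impulse's own implementation may differ in rounding and interpolation details.

```python
def fit_shortest_axis(width, height, target=320):
    """Return the scaled size and the centered crop box for a square model input."""
    scale = target / min(width, height)
    resized_w = round(width * scale)
    resized_h = round(height * scale)
    # Crop the overflowing (longer) axis equally on both sides.
    left = (resized_w - target) // 2
    top = (resized_h - target) // 2
    return (resized_w, resized_h), (left, top, left + target, top + target)


size, crop_box = fit_shortest_axis(1920, 1080)
print(size, crop_box)
```

With this mode the aspect ratio of the equipment is preserved, at the cost of losing whatever falls outside the central square of a wide or tall photo.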

#️⃣ To modify the raw image features in the applicable format, I navigated to the Impulse design ➡ Image section, set the Color depth parameter as RGB, and clicked Save parameters.

#️⃣ Then, I proceeded to click Generate features to extract the required features for training by applying the Image processing block.

#️⃣ After extracting features successfully, I navigated to the Impulse design ➡ Object detection section and modified the neural network settings and architecture to achieve reliable accuracy and validity.

#️⃣ Based on my extended experiments, I settled on the following final model configuration.

📌 Neural network settings:

- Number of training cycles ➡ 100

- Learning rate ➡ 0.001

- Validation set size ➡ 5%

📌 Neural network architecture:

- FOMO (Faster Objects, More Objects) MobileNetV2 0.35

#️⃣ After training the model with the final configurations, Edge Impulse reported an F1 score of 60.0%. The score is low because I provided a very limited validation set, which does not even include samples for some labels.
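For readers unfamiliar with the metric, the F1 score is the harmonic mean of precision and recall. The Python sketch below shows the arithmetic with made-up detection counts chosen only for illustration; they are not the counts from this project's validation set.

```python
def f1_score(true_positives, false_positives, false_negatives):
    """Compute F1 as the harmonic mean of precision and recall."""
    if true_positives == 0:
        return 0.0  # no correct detections means precision and recall are both zero
    precision = true_positives / (true_positives + false_positives)
    recall = true_positives / (true_positives + false_negatives)
    return 2 * precision * recall / (precision + recall)


# Hypothetical counts: 3 correct detections, 2 false alarms, 2 missed objects.
print(round(f1_score(3, 2, 2), 3))
```

With a validation set this small, a single missed or spurious detection shifts the score by a large margin, so the reported value should be read as a rough indicator.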

Step 4.3: Evaluating the model accuracy and deploying the validated model

#️⃣ First, to obtain the validation score of the trained model based on the provided testing samples, I navigated to the Impulse design ➡ Model testing section and clicked Classify all.

#️⃣ Based on the initial F1 score, I started to rigorously experiment with the confidence score threshold value to pinpoint the optimum range for the real-world conditions.

#️⃣ After experimenting with the Unoptimized (float32) and Quantized (int8) model variants, I raised the model's F1 score to as much as 70.0% and estimated the sweet spot for the threshold range.

#️⃣ To deploy the validated model optimized for my hardware, I navigated to the Impulse design ➡ Deployment section and searched for UNO Q.

#️⃣ I chose the Quantized (int8) model variant (optimization) to achieve the optimal performance while running the deployed model.

#️⃣ Finally, I clicked Build to deploy the model. However, contrary to the usual deployment procedure, I did not utilize the downloaded EIM binary since the Arduino App Lab provides a pipeline to link Edge Impulse accounts to import deployed models directly. Please refer to the following step to learn how to import deployed models via the provided Brick.

Step 5: Adding and adjusting the necessary Bricks to develop a feature-rich lab assistant application on the App Lab

As mentioned earlier, the Arduino App Lab provides pre-configured services and Docker containers, Bricks, to add various features to a custom App Lab application. Each Brick provides a specific set of capabilities that are executed by the Qualcomm MPU (Linux) and can be accessed by the Python script (backend) of the application via the built-in high-level APIs.

To develop my lab assistant App Lab application, I utilized these Bricks without using any additional third-party APIs or services:

#️⃣ To enable the Cloud LLM Brick to utilize Google Gemini, open its Brick configuration section and register the previously acquired Gemini API key.

#️⃣ To enable the Video Object Detection Brick to utilize my custom Edge Impulse FOMO object detection model, I employed the built-in pipeline to link my Arduino account with Edge Impulse Studio to import my FOMO model directly into the App Lab.

#️⃣ First, I signed in to my Arduino account on the Arduino App Lab.

#️⃣ Then, I opened the Video Object Detection Brick configuration section, clicked Train new AI model, and linked my Arduino account with Edge Impulse Studio to grant the App Lab access to my Edge Impulse account.

#️⃣ On Edge Impulse Studio, I selected the target development device for my project as Arduino UNO Q. Otherwise, the App Lab pipeline cannot access the essential model information to show importable models.

#️⃣ Then, on the App Lab, I installed my custom FOMO object detection model for identifying lab equipment.

#️⃣ After configuring Bricks, the App Lab updates the app.yaml file automatically to apply the requested changes.

Step 6: Programming the Python script (backend) executed by the Qualcomm QRB2210 microprocessor (MPU)

According to the App Lab application structure, this Python script behaves as the application backend and manages all data transfer processes, Brick features, and interconnected services.

📁 main.py

⭐ Include the required system and high-level Brick libraries.

import os

from arduino.app_bricks.video_objectdetection import VideoObjectDetection

from arduino.app_bricks.cloud_llm import CloudLLM, CloudModel

from arduino.app_bricks.web_ui import WebUI

from arduino.app_bricks.dbstorage_sqlstore import SQLStore

from arduino.app_utils import *

from datetime import datetime

from time import sleep

import re

import random

import string

import cv2

#️⃣ To bundle all the functions to write a more concise script, I used a Python class.

⭐ In the __init__ function:

⭐ Initialize the integrated Cloud LLM Brick to employ the provided Google Gemini API key to get access to gemini-2.5-flash. Also, assign the system prompt to ensure the LLM behaves as a lab assistant and generates AI lessons in the HTML format.

⭐ Initialize the built-in classifier instance of the Video Object Detection Brick, providing a real-time video stream over WebSocket, utilizing the installed Edge Impulse FOMO object detection model to precisely identify lab equipment. I adjusted confidence and debounce (intermission before executing the callback function for the same label) values based on my experiments on Edge Impulse Studio.

⭐ Declare the callback function to activate once the classifier detects lab equipment. In this case, a lambda is a compact way to forward the detection results to the given class method.

⭐ Create a new SQL database via the Database Brick to register the user and LLM-produced lesson information. Then, create the essential database tables. The built-in table creation function checks whether the given table exists to avoid data loss. However, if requested, drop the previously generated tables to start with a clean slate.

⭐ Initiate the built-in WebUI Brick and declare the web dashboard's root folder path, which handles hosting the custom lab assistant web dashboard.

⭐ As the WebUI Brick establishes a WebSocket automatically, it allows the Python script to listen to WebSocket messages from the client (web dashboard) as the server and call assigned functions accordingly to process the transferred message (dictionary).

⭐ Via the WebUI Brick, expose an HTTP GET REST API endpoint to transfer the user account activation status and its associated LLM-generated lesson information to all clients, including the web dashboard. The Brick achieves this by executing the assigned Python function every time the exposed endpoint is called.

⭐ Employ the Arduino Router background Linux service to enable the STM32 MCU to borrow and run the provided functions on the Qualcomm MPU.
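The debounce value mentioned above suppresses repeated callbacks for the same label within a time window. The Python class below is a generic sketch of that idea, written with an injectable clock so it can be exercised without waiting. It is not the Video Object Detection Brick's internal implementation, and the class name is hypothetical.

```python
import time


class LabelDebouncer:
    """Allow a callback for a given label at most once per debounce window."""

    def __init__(self, debounce_sec, clock=time.monotonic):
        self.debounce_sec = debounce_sec
        self.clock = clock
        self._last_fired = {}  # label -> timestamp of the last accepted detection

    def should_fire(self, label):
        now = self.clock()
        last = self._last_fired.get(label)
        if last is not None and now - last < self.debounce_sec:
            return False  # same label seen too recently, skip the callback
        self._last_fired[label] = now
        return True


debouncer = LabelDebouncer(debounce_sec=5)
print(debouncer.should_fire("microscope"))   # first sighting is accepted
print(debouncer.should_fire("microscope"))   # immediate repeat is suppressed
print(debouncer.should_fire("dynamometer"))  # a different label is tracked separately
```

Tracking timestamps per label means a newly detected piece of equipment is reported immediately even while another label is still inside its window.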

def __init__(self, clean_tables=False):

# Initialize the integrated Cloud LLM management module to utilize the provided Google (Gemini) API key to generate AI-based lab lessons.

self.llm_gemini = CloudLLM(

model=CloudModel.GOOGLE_GEMINI,

system_prompt="You are a lab assistant and must generate HTML pages about the given questions by providing extensive information on the requested subject."

)

# Initialize the integrated object detection model classifier instance with video stream (over WebSocket) for the provided Edge Impulse FOMO object detection model to precisely identify lab equipment.

self.edge_impulse_model = VideoObjectDetection(confidence=0.35, debounce_sec=5)

# Define the callback function to run once the provided model detects a piece of equipment.

self.edge_impulse_model.on_detect_all(lambda detections: self.process_inference_results(detections))

# Declare and create the SQL database to register user and lesson information.

self.db = SQLStore("lab_assistant.db")

# Create the essential database tables. The built-in table creation function checks whether the given table already exists.

if(clean_tables):

self.db.drop_table("account_info")

self.db.drop_table("lesson_info")

self.db.create_table("account_info", {"user_id": "INT", "firstname": "TEXT", "lastname": "TEXT", "activation": "TEXT"})

self.db.create_table("lesson_info", {"question": "TEXT", "equipment": "TEXT", "date": "TEXT", "user_id": "INT", "lesson_id": "TEXT", "filename": "TEXT"})

# Declare the integrated WebUI Brick class instance to initiate the custom lab assistant web dashboard.

self.web_ui = WebUI(assets_dir_path="/app/lab_web_dashboard")

# Listen for WebSocket messages from the client (web dashboard) to obtain the latest updates.

self.web_ui.on_message("interface_web_control", self.interface_web_control_on_app)

self.web_ui.on_message("manage_account_actions", self.manage_account_actions_on_app)

self.web_ui.on_message("save_new_image_sample", self.save_new_image_sample_on_app)

# Expose a REST API endpoint (HTTP GET) to transfer the current user account and its associated AI-generated lesson information to all clients, including the web dashboard.

self.web_ui.expose_api("GET", "/account_lessons", self.update_web_dashboard_with_database_info)

# Declare the sensor variables dictionary.

self.sensor_values = {

"pressure": 0,

"alcohol_concentration": 0,

"weight": 0,

"no2": {"concentration": 0, "board_temp": 0},

"geiger": {"cpm": 0, "nsvh": 0, "usvh": 0},

"gnss": {"date": "", "utc": "", "lat_dir": "", "lon_dir": "", "latitude": 0, "longitude": 0, "altitude": 0, "sog": 0, "cog": 0}

}

# Declare the essential account information holders.

self.sign_up_account_info = None

# Employ the Arduino Router background Linux service to enable the STM32 MCU to borrow and run these functions on the Qualcomm MPU.

Bridge.provide("update_sensor_on_app", self.update_sensor_on_app)

Bridge.provide("manage_account_actions_on_stm32", self.manage_account_actions_on_stm32)
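The table setup in the constructor can be reproduced outside App Lab with the standard sqlite3 module. This is a minimal sketch, assuming the SQLStore Brick behaves like `CREATE TABLE IF NOT EXISTS`, as the comment in the constructor suggests. The `create_tables` helper and the in-memory database are hypothetical stand-ins, not part of the Brick API.

```python
import sqlite3

# Column definitions copied from the constructor above.
SCHEMA = {
    "account_info": {"user_id": "INT", "firstname": "TEXT", "lastname": "TEXT", "activation": "TEXT"},
    "lesson_info": {"question": "TEXT", "equipment": "TEXT", "date": "TEXT",
                    "user_id": "INT", "lesson_id": "TEXT", "filename": "TEXT"},
}

def create_tables(conn, clean_tables=False):
    """Hypothetical stand-in for the drop_table / create_table calls."""
    for table, columns in SCHEMA.items():
        if clean_tables:
            # Start from scratch, discarding previously registered rows.
            conn.execute(f"DROP TABLE IF EXISTS {table}")
        cols = ", ".join(f"{name} {sql_type}" for name, sql_type in columns.items())
        # IF NOT EXISTS makes repeated start-ups harmless.
        conn.execute(f"CREATE TABLE IF NOT EXISTS {table} ({cols})")
    conn.commit()

conn = sqlite3.connect(":memory:")
create_tables(conn)
create_tables(conn)  # The second call leaves the existing tables untouched.
```

Passing `clean_tables=True` mirrors the constructor flag: it wipes both tables before recreating them, which is handy while testing but removes every registered account and lesson.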

⭐ In the process_inference_results function:

⭐ Once the built-in Brick classifier runs an inference with the provided Edge Impulse FOMO object detection model, process the retrieved results to obtain the detected label for the lab equipment.

⭐ Since the classifier returns a dictionary and sorts the detection results by confidence levels (scores), get the first dictionary item as the most accurate detection result.

⭐ If the user account is activated, transfer the processed detection result to the web dashboard via the established WebSocket.

def process_inference_results(self, detections: dict):

# In my experiments, the built-in detection function returned the detection results as a dictionary sorted by confidence level. Thus, the first dictionary item is the most confident result when multiple items are detected.

label, result = next(iter(detections.items()))

confidence = round(result[0]["confidence"], 2)

# If the current user account is activated, transfer the processed detection result to the web dashboard.

current_user = self.db.execute_sql("SELECT * FROM account_info WHERE activation = 'activated';")

if(current_user != None):

self.web_ui.send_message("latest_obj_detection_result", {"label": label, "confidence": confidence})
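The function above relies on the observed ordering of the returned dictionary. If that ordering ever changes, the highest-confidence detection can be selected explicitly instead. The sketch below assumes the payload shape implied by the code above: a dictionary mapping each label to a list of detections, each carrying a "confidence" key. The `pick_top_detection` helper and the sample payload are hypothetical.

```python
def pick_top_detection(detections):
    """Return (label, confidence) for the most confident detection, or None if empty."""
    best = None
    for label, boxes in detections.items():
        for box in boxes:
            score = box["confidence"]
            if best is None or score > best[1]:
                best = (label, score)
    if best is None:
        return None
    # Round only at the end so the comparison uses the raw scores.
    return best[0], round(best[1], 2)

# Hypothetical payload with two labels, deliberately not sorted by confidence.
sample = {
    "beaker": [{"confidence": 0.41}],
    "dynamometer": [{"confidence": 0.873}, {"confidence": 0.52}],
}
print(pick_top_detection(sample))
```

Unlike `next(iter(...))`, this helper returns None for an empty dictionary instead of raising StopIteration, so the caller can simply skip the WebSocket message.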

⭐ In the generate_AI_lesson_w_gemini function:

⭐ By utilizing the built-in Cloud LLM chat pipeline, ask the gemini-2.5-flash LLM to generate a lesson about the provided question in the HTML format.

⭐ Then, derive only the generated HTML page from the retrieved LLM response.

⭐ After obtaining the LLM-generated HTML page successfully, produce the unique 5-character alphanumeric lesson ID. Then, save the HTML page by adding the account (user) ID, subject (equipment) name, and unique lesson ID to the file name.

#️⃣ Such as: 2_dynamometer_MJue4.html

⭐ Finally, insert the LLM-generated lesson information into the associated database table (SQL) and inform the web dashboard accordingly.

def generate_AI_lesson_w_gemini(self, lesson_info):

retrieved_llm_response = self.llm_gemini.chat("Generate an HTML page on this question: " + lesson_info["question"])

# Derive only the generated HTML page from the retrieved LLM response.

processed_llm_response = re.search(r'(<!DOCTYPE html>.*?</html>)', retrieved_llm_response, re.DOTALL)

if(processed_llm_response):

# If the provided LLM produces the lesson as an HTML page successfully:

generated_lesson_html = processed_llm_response.group(1)

# Generate the unique 5-character alphanumeric lesson ID.

unique_lesson_id = ''.join(random.choices(string.ascii_letters + string.digits, k=5))

# Get the lesson generation date in the required format.

date = datetime.now().strftime("%m %d, %Y %H:%M:%S")

# Save the LLM-generated lesson as an HTML file.

lesson_filename = str(lesson_info["user_id"]) + "_" + lesson_info["equipment"] + "_" + unique_lesson_id + ".html"

with open("lab_web_dashboard/lessons/"+lesson_filename, "w", encoding="utf-8") as new_lesson:

new_lesson.write(generated_lesson_html)

# Register the generated lesson information to the associated database table. Single quotes in the question are doubled so that an apostrophe does not terminate the SQL string literal.

self.db.execute_sql("INSERT INTO lesson_info (`question`, `equipment`, `date`, `user_id`, `lesson_id`, `filename`) VALUES ('"+lesson_info["question"].replace("'", "''")+"', '"+lesson_info["equipment"]+"', '"+date+"', "+str(lesson_info["user_id"])+", '"+unique_lesson_id+"', '"+lesson_filename+"');")

# Notify the web dashboard accordingly.

self.web_ui.send_message("generate_ai_lesson_action", {"response": "Google (Gemini) [gemini-2.5-flash] produced the requested lesson successfully!"})

else:

self.web_ui.send_message("generate_ai_lesson_action", {"response": "🪐 Google (Gemini) [gemini-2.5-flash] LLM could not generate an appropriately-formatted HTML page. Please try again!"})
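The extraction and naming steps of this function can be tried without calling Gemini. The sketch below reuses the same regular expression and ID recipe. The helper names (`extract_html`, `new_lesson_id`, `build_lesson_filename`) and the sample reply are hypothetical and only illustrate the steps.

```python
import random
import re
import string

def extract_html(llm_response):
    """Return only the HTML document embedded in the LLM reply, or None."""
    match = re.search(r"(<!DOCTYPE html>.*?</html>)", llm_response, re.DOTALL)
    return match.group(1) if match else None

def new_lesson_id(length=5):
    """Random alphanumeric ID, e.g. 'MJue4'."""
    return "".join(random.choices(string.ascii_letters + string.digits, k=length))

def build_lesson_filename(user_id, equipment, lesson_id):
    """Compose <user_id>_<equipment>_<lesson_id>.html."""
    return f"{user_id}_{equipment}_{lesson_id}.html"

# Hypothetical reply: the model wraps the page in extra chatter.
reply = "Sure! Here is the page:\n<!DOCTYPE html>\n<html><body>Hi</body></html>\nEnjoy."
page = extract_html(reply)
print(build_lesson_filename(2, "dynamometer", new_lesson_id()))
```

Note that the pattern is case-sensitive: a reply starting with a lowercase `<!doctype html>` would not match and would end up in the "could not generate" branch. Passing `re.IGNORECASE` alongside `re.DOTALL` would relax that, if desired.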

⭐ In the update_sensor_on_app function:

⭐ This function is provided to the Router (Bridge) service.

⭐ Once the STM32 MCU executes this function to transfer the collected sensor variables, round the variables to prevent overflow, save them to their respective dictionary items, and finally send the processed dictionary (sensor variables) to the web dashboard via WebSocket.

def update_sensor_on_app(self, p, a, w, n_c, n_b, g_c, g_n, g_u, gn_d, gn_u, gn_lt_d, gn_ln_d, gn_lat, gn_lon, gn_alt, gn_sog, gn_cog):

# Record the retrieved sensor variables in the associated dictionary.

self.sensor_values["pressure"] = round(p, 2)

self.sensor_values["alcohol_concentration"] = round(a, 2)

self.sensor_values["weight"] = round(w, 2)

self.sensor_values["no2"]["concentration"] = round(n_c, 2)

self.sensor_values["no2"]["board_temp"] = n_b

self.sensor_values["geiger"]["cpm"] = g_c

self.sensor_values["geiger"]["nsvh"] = g_n

self.sensor_values["geiger"]["usvh"] = g_u

self.sensor_values["gnss"]["date"] = gn_d

self.sensor_values["gnss"]["utc"] = gn_u

self.sensor_values["gnss"]["lat_dir"] = gn_lt_d

self.sensor_values["gnss"]["lon_dir"] = gn_ln_d

self.sensor_values["gnss"]["latitude"] = round(gn_lat, 4)

self.sensor_values["gnss"]["longitude"] = round(gn_lon, 4)

self.sensor_values["gnss"]["altitude"] = gn_alt

self.sensor_values["gnss"]["sog"] = gn_sog

self.sensor_values["gnss"]["cog"] = gn_cog

# Transfer the obtained sensor information to the lab assistant web dashboard via the WebSocket connection.

self.web_ui.send_message("sensor_values", self.sensor_values)
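To check the mapping from the 17 positional arguments to the nested dashboard payload without the board attached, the same rounding rules can be wrapped in a pure function. This is only a sketch: `pack_sensor_values` and the sample readings are hypothetical, while the argument order follows the function signature above.

```python
def pack_sensor_values(p, a, w, n_c, n_b, g_c, g_n, g_u,
                       gn_d, gn_u, gn_lt_d, gn_ln_d,
                       gn_lat, gn_lon, gn_alt, gn_sog, gn_cog):
    """Group the positional readings into the nested dictionary sent to the dashboard."""
    return {
        "pressure": round(p, 2),
        "alcohol_concentration": round(a, 2),
        "weight": round(w, 2),
        "no2": {"concentration": round(n_c, 2), "board_temp": n_b},
        "geiger": {"cpm": g_c, "nsvh": g_n, "usvh": g_u},
        "gnss": {
            "date": gn_d, "utc": gn_u,
            "lat_dir": gn_lt_d, "lon_dir": gn_ln_d,
            # Coordinates keep four decimals; the other floats keep two.
            "latitude": round(gn_lat, 4), "longitude": round(gn_lon, 4),
            "altitude": gn_alt, "sog": gn_sog, "cog": gn_cog,
        },
    }

# Made-up readings in the order the MCU sends them.
reading = pack_sensor_values(101.3249, 0.0, 12.5, 0.031, 24, 18, 117, 0.117,
                             "2026/05/10", "12:30:05", "N", "E",
                             41.012345, 28.976543, 35.0, 0.0, 0.0)
print(reading["pressure"], reading["gnss"]["latitude"])
```

Returning a fresh dictionary also makes the argument order easy to unit-test, which matters here because a single swapped positional argument on the MCU side would silently mislabel a reading.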

#️⃣ To maintain account generation and verification processes by employing the capacitive fingerprint sensor, I needed to chain operations executed by the Python backend and the STM32 MCU sequentially. To reduce the stress on the Bridge service, I utilized two functions to handle fingerprint authentication actions.

⭐ In the manage_account_actions_on_app function:

⭐ This function is called whenever the web dashboard sends an account-related request via WebSocket.

⭐ Initiate the requested fingerprint task (register or verify) on the STM32 microcontroller via the borrowed interface_web_control function.

⭐ Once requested, log out the activated user account by updating the associated SQL database table.

⭐ Once requested, remove the activated user account and the LLM-generated lessons associated with the account by deleting the respective information from the associated SQL database tables.

⭐ Once requested, produce a new AI lesson about the provided question via Google Gemini (gemini-2.5-flash).

def manage_account_actions_on_app(self, sid, data):

com = data["command"]

if(com == "signin_user"):

# Initiate the associated fingerprint sensor task on the STM32 microcontroller via the borrowed function.

Bridge.call("interface_web_control", "verify_id", int(data["given_user_id"]))

sleep(1)

elif(com == "signup_user"):

self.sign_up_account_info = data

# Initiate the associated fingerprint sensor task on the STM32 microcontroller via the borrowed function.

Bridge.call("interface_web_control", "register_id", -2)

sleep(1)

elif(com == "logout_user"):

self.db.execute_sql("UPDATE account_info SET activation = 'not_activated' WHERE user_id = "+data["current_user_id"]+";")

elif(com == "delete_user"):

self.db.execute_sql("DELETE FROM account_info WHERE user_id = "+data["current_user_id"]+";")

# Also delete all AI-generated lessons associated with this account.

self.db.execute_sql("DELETE FROM lesson_info WHERE user_id = "+data["current_user_id"]+";")

elif(com == "generate_new_ai_lesson"):

self.generate_AI_lesson_w_gemini(data)
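The logout and delete branches build their SQL statements by string concatenation, which works as long as the dashboard always sends a clean numeric ID. As a hedged alternative, the same two actions are sketched below with the standard sqlite3 module and `?` placeholders. Whether the SQLStore Brick accepts query parameters is not shown in this tutorial, so `logout_user`, `delete_user`, and the in-memory database are hypothetical stand-ins.

```python
import sqlite3

def logout_user(conn, user_id):
    """Mark the account as not activated; int() rejects non-numeric IDs."""
    conn.execute("UPDATE account_info SET activation = 'not_activated' WHERE user_id = ?",
                 (int(user_id),))
    conn.commit()

def delete_user(conn, user_id):
    """Remove the account and every lesson generated for it."""
    uid = int(user_id)
    conn.execute("DELETE FROM account_info WHERE user_id = ?", (uid,))
    conn.execute("DELETE FROM lesson_info WHERE user_id = ?", (uid,))
    conn.commit()

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE account_info (user_id INT, firstname TEXT, lastname TEXT, activation TEXT)")
conn.execute("CREATE TABLE lesson_info (question TEXT, equipment TEXT, date TEXT, "
             "user_id INT, lesson_id TEXT, filename TEXT)")
# Placeholders also cope with apostrophes in names and questions.
conn.execute("INSERT INTO account_info VALUES (?, ?, ?, ?)", (2, "Ada", "O'Neil", "activated"))
conn.execute("INSERT INTO lesson_info VALUES (?, ?, ?, ?, ?, ?)",
             ("What's a dynamometer?", "dynamometer", "05 10, 2026 12:00:00",
              2, "MJue4", "2_dynamometer_MJue4.html"))

logout_user(conn, "2")
status = conn.execute("SELECT activation FROM account_info WHERE user_id = 2").fetchone()[0]
delete_user(conn, "2")
```

The IDs are passed as strings on purpose, mimicking values arriving from the web dashboard. The `int()` conversion raises ValueError for anything that is not a number before the database is touched.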

⭐ In the manage_account_actions_on_stm32 function:

⭐ This function is provided to the Router (Bridge) service.

⭐ Once the STM32 MCU sends the newly registered fingerprint ID, create a new user account with the previously received user information from the web dashboard. The transferred fingerprint ID is saved as the unique user ID to the associated SQL database table.

⭐ Once the STM32 MCU sends the verified (matched) fingerprint ID, activate the requested account if the verified user ID does not belong to a previously discarded account.

⭐ Inform the web dashboard of ongoing operations via WebSocket.

def manage_account_actions_on_stm32(self, command, provided_user_id):

if(command == "signup"):

if(self.sign_up_account_info == None):

self.web_ui.send_message("signup_action", {"response": "❌ Python backend did not receive the given user information!"})

else:

if(provided_user_id == -1):

self.web_ui.send_message("signup_action", {"response": "❌ Fingerprint sensor cannot register!"})

elif(provided_user_id == -2):

self.web_ui.send_message("signup_action", {"response": "🔍 Fingerprint sensor cannot find an available ID!"})

else:

# Create a new user account with the provided user information and the given fingerprint scan ID as the user ID.

self.db.execute_sql("INSERT INTO account_info (`user_id`, `firstname`, `lastname`, `activation`) VALUES ("+str(provided_user_id)+", '"+self.sign_up_account_info["firstname"]+"', '"+self.sign_up_account_info["lastname"]+"', 'activated');")

self.sign_up_account_info = None

self.web_ui.send_message("signup_action", {"response": "New user account successfully created!"})

elif(command == "signin"):

if(provided_user_id == -1):

self.web_ui.send_message("signin_action", {"response": "🔍 The given user ID was not verified by the fingerprint sensor! Try again!"})

elif(provided_user_id == -2):

self.web_ui.send_message("signin_action", {"response": "❌ Fingerprint sensor cannot capture fingerprints precisely!"})

else:

# Activate the requested account via its verified (matched) user (fingerprint) ID.

account_check = self.db.execute_sql("SELECT * FROM account_info WHERE user_id = "+str(provided_user_id)+";")

if(account_check != None):

self.db.execute_sql("UPDATE account_info SET activation = 'activated' WHERE user_id = "+str(provided_user_id)+";")

self.web_ui.send_message("signin_action", {"response": "Account activated successfully!"})

else:

self.web_ui.send_message("signin_action", {"response": "✍ Given fingerprint belongs to a previously removed account! Please register a new account!"})
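The STM32 side reports failures through the sentinel values -1 and -2, whose meaning depends on the command. A lookup table keeps that mapping in one place. The sketch below copies the messages from the function above, while `interpret_fingerprint_reply` and the tuple it returns are hypothetical conventions for illustration only.

```python
# Sentinel codes sent by the MCU, mapped to the dashboard messages used above.
SIGNUP_ERRORS = {
    -1: "❌ Fingerprint sensor cannot register!",
    -2: "🔍 Fingerprint sensor cannot find an available ID!",
}
SIGNIN_ERRORS = {
    -1: "🔍 The given user ID was not verified by the fingerprint sensor! Try again!",
    -2: "❌ Fingerprint sensor cannot capture fingerprints precisely!",
}

def interpret_fingerprint_reply(command, provided_user_id):
    """Return ("error", message) for a sentinel code, else ("ok", fingerprint_id)."""
    if command == "signup":
        errors = SIGNUP_ERRORS
    elif command == "signin":
        errors = SIGNIN_ERRORS
    else:
        raise ValueError(f"Unknown command: {command}")
    if provided_user_id in errors:
        return ("error", errors[provided_user_id])
    # Any other value is treated as a valid fingerprint (user) ID.
    return ("ok", provided_user_id)

print(interpret_fingerprint_reply("signin", 3))
```

With this split, the database work (creating or activating the account) only runs for the "ok" case, and new sentinel codes can be added without touching the branching logic.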

⭐ In the update_web_dashboard_with_database_info function:

⭐ Since this function runs once the associated exposed REST API endpoint is called, it serves to dynamically update the web dashboard via the Python backend.

⭐ According to the account activation status and the number of LLM-generated lessons, produce HTML elements.

⭐ If there are LLM-generated lessons associated with the activated account, sort the retrieved lessons array based on their creation dates to produce an ordered list from latest to earliest. Then, based on the sorted lesson array, proceed to generate HTML lesson information cards.

⭐ Finally, depending on the account activation status, return the retrieved account information and generated HTML content.

def update_web_dashboard_with_database_info(self):